Good AI governance is practical, proportionate, and built to last. Our approach reflects that in everything we do.

Layered governance

What does governance need to cover?

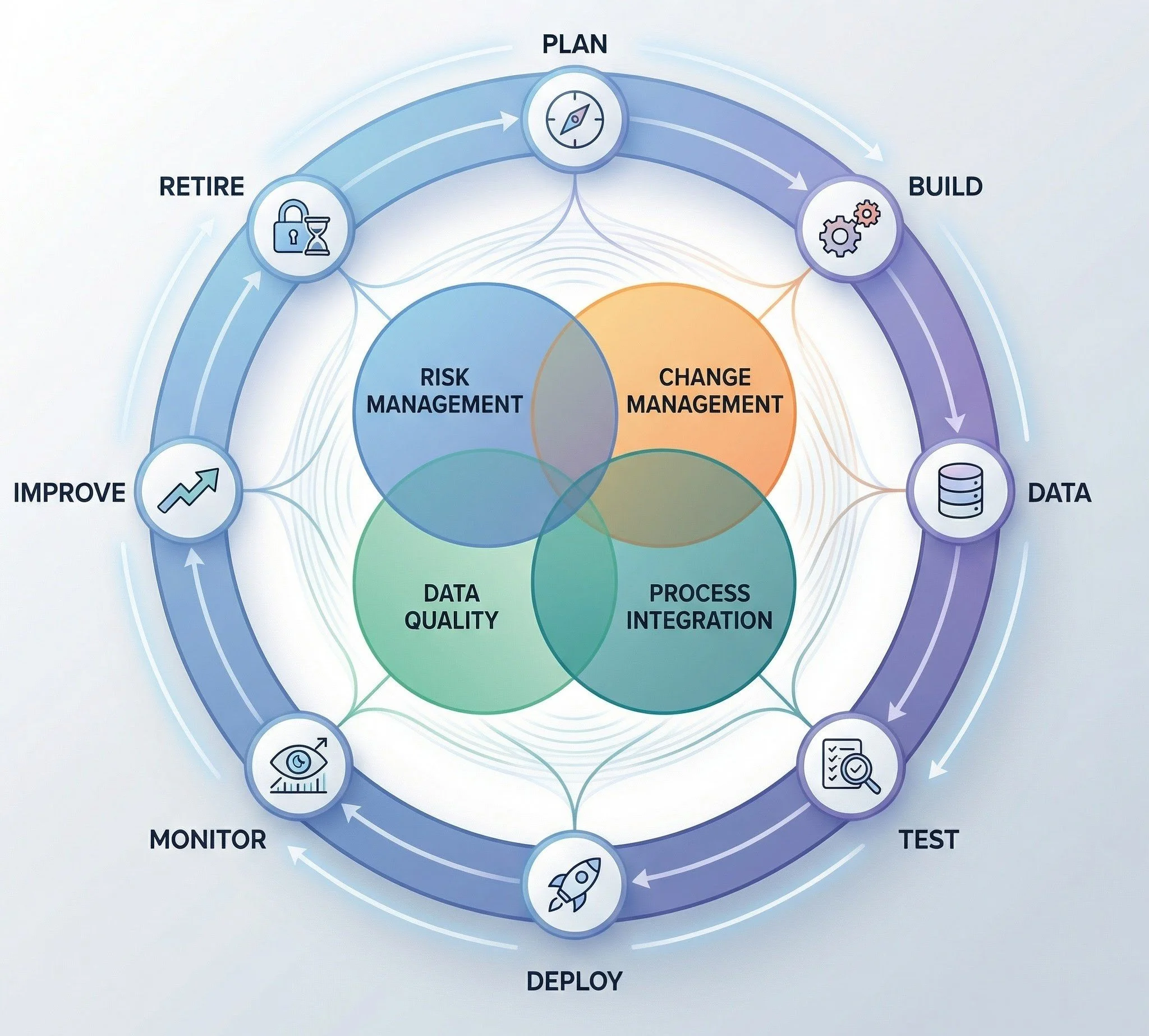

AI Life Cycle

When does governance need to happen?

Tools & Guidance

Where do you start?

Our approach is built around three principles that work together. They are not separate methodologies — they are three lenses we apply to every engagement, because good governance needs all three at once.

Establish multi-level governance accountability

Diagnose policy-to-practice gaps

Design integrated review and escalation mechanisms

Embed auditable documentation frameworks

Facilitate cross-functional governance alignment

No single AI framework can cover the full range of ethical, technical, operational, and legal needs across different domains. A layered approach helps organisations address the unique risks and specific standards of each sector, strengthening safety, accountability, and practical implementation.

Three typical layers of AI governance

“These layers aren’t rigid compartments—they’re a directional way of thinking with natural overlap and interaction between them.”

-

Your organisation begins by clarifying the values and ethical principles that should guide how AI is designed, deployed, and used. This foundation establishes a shared understanding of what responsible means in your context, ensuring all decisions remain aligned with human dignity, fairness, and societal benefit.

-

This layer embeds safeguards, checks, and documentation across the entire AI lifecycle to ensure systems are safe, fair, and reliable. It provides the structures needed to assess risks, apply appropriate controls, and generate evidence that your AI is functioning responsibly over time.

-

Clear roles, responsibilities, and governance structures ensure that people—not algorithms—remain accountable for outcomes. This layer reinforces transparency, independent review, and ongoing oversight so that AI use stays aligned with organisational values and public expectations.

Below are key ethical principles that shape your commitment to building trustworthy and equitable AI systems

-

United Nations

The UN’s AI governance vision draws on several key frameworks, including Governing AI for Humanity (HLAB‑AI, 2024), the UN System White Paper on AI Governance (CEB, 2024), UNESCO’s Recommendation on the Ethics of Artificial Intelligence (2021), WHO’s Ethics and Governance of AI for Health (2021), and UNICEF’s Policy Guidance on AI for Children (2020).

-

National and Sub-national Government Efforts

Countries including the United States, Japan, South Korea, the United Kingdom, Canada, China, and India are developing diverse AI governance approaches that reflect their strengths and priorities, creating a rich global landscape of ideas and innovation.

-

Others (OECD AI Policy, Montreal Declaration, etc.)

Global AI ethics frameworks include the OECD AI Principles (promoting human‑centred, fair, transparent and accountable AI) and the Montreal Declaration (emphasizing well‑being, autonomy, fairness and democratic participation).

The risk and control layer reflects the expectations of major AI governance frameworks

-

AIGA – Hourglass Model:

Designed by the University of Turku, the model proposes a multilayered AI governance framework that translates high‑level ethical principles into practical and verifiable mechanisms, with concrete structures, roles and processes across the full AI lifecycle.

-

ISO 42001

Published by the International Organization for Standardization (ISO) and the International Electrotechnical Commission (IEC), ISO/IEC 42001:2023 sets out requirements for an AI Management System to ensure responsible development and oversight of AI.

-

NIST

Developed by the U.S. National Institute of Standards and Technology (NIST), the AI Risk Management Framework provides voluntary guidelines to help organizations identify, assess, and manage AI‑related risks to support trustworthy AI.

-

IEEE 7000 Series

Developed by the Institute of Electrical and Electronics Engineers (IEEE), the IEEE 7000 standards provide structured methods for embedding human values and ethical considerations into the design of autonomous and intelligent systems.

The assurance and oversight layer reflects the need for ongoing monitoring and control over high‑risk AI systems.

-

EU AI Act

The EU AI Act establishes a risk‑based regulatory system that imposes strict obligations, including documentation, monitoring, and human oversight, on high‑risk AI systems. It requires providers, and in some respects deployers, to maintain continuous risk management, transparency, and quality controls to prevent harm and safeguard fundamental rights.

-

Colorado AI Act

The Colorado AI Act mandates annual impact assessments and bias mitigation measures for high‑risk AI systems. It strengthens accountability by requiring organisations to maintain ongoing documentation, monitoring, and risk controls to detect and reduce discriminatory outcomes.

The most common governance gap is not a missing policy. It is a transition that nobody planned for.

- Define the problem, objectives, users, and success criteria.

- Collect, manage, and prepare data.

- Develop and train the AI model or system.

- Evaluate performance, robustness, and risks.

- Release the system into real use.

- Track performance, behavior, and issues in operation.

- Update the system based on feedback and new data.

- Safely decommission the system when no longer needed.
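For teams that track their AI systems in code or a spreadsheet export, the idea that every transition between stages needs an owner can be sketched roughly as below. This is a minimal illustration, not a prescribed method: the stage labels, the `LifecycleRecord` class, and the strictly linear ordering are all assumptions made for the example (in practice an update usually loops back to development and evaluation).

```python
from dataclasses import dataclass, field

# Illustrative shorthand for the eight lifecycle steps listed above (assumed labels).
STAGES = ["define", "data", "develop", "evaluate", "deploy", "monitor", "update", "retire"]


@dataclass
class LifecycleRecord:
    """Hypothetical record of where one AI system sits in its lifecycle."""
    system: str
    stage: str = "define"
    handovers: list = field(default_factory=list)

    def advance(self, approver: str) -> str:
        """Move to the next stage, refusing if nobody is named for the handover."""
        if not approver.strip():
            raise ValueError("every stage transition needs a named approver")
        index = STAGES.index(self.stage)
        if index == len(STAGES) - 1:
            raise ValueError("system is already retired")
        next_stage = STAGES[index + 1]
        # Keep an audit trail of who approved which transition.
        self.handovers.append((self.stage, next_stage, approver))
        self.stage = next_stage
        return next_stage


record = LifecycleRecord("Support chatbot")
record.advance("Product owner")
```

The point of the sketch is the refusal path: a transition with no named approver fails loudly instead of passing silently.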

Simplified tools - Start with what matters most - Build from there.

Structured, lightweight tools (assessments, templates, checklists, and guided processes) can help your organisation make real progress in specific, high-priority areas.

Practical AI Governance Toolkit for SMEs

Diagnostic & Scoping Tools

-

A structured self-assessment that helps organisations evaluate their AI governance, controls, oversight, and accountability practices. It provides a baseline and identifies priority gaps.

-

A catalogue of all AI systems in use — capturing purpose, vendor, data inputs, risk level, and business owner. Most SMEs underestimate how many AI tools they actually use.

-

A guided decision tree that determines whether regulations apply, what risk tier an organisation falls under, and what obligations follow. It translates complex regulation into practical action steps.

Policies, Roles & Documentation

-

Defines acceptable staff use, data input rules, disclosure requirements, and when human review is mandatory. A fast, high-impact governance step.

-

A practical checklist covering bias testing, training data transparency, explainability, liability, security, and audit rights. Essential for organisations buying AI solutions.

-

Clearly defines who is Responsible, Accountable, Consulted, and Informed for AI deployment, monitoring, and incident management. Prevents governance gaps and strengthens executive oversight.

Other Tools

-

A concise, non-technical summary of AI use, risk exposure, and governance status. Enables directors and trustees to discharge oversight responsibilities confidently.

-

A non-technical guide enabling teams to question vendors or data scientists about discrimination testing and validation practices. Empowers leadership to ask the right questions.

-

A structured approval record documenting risk assessment, control validation, and executive accountability before go-live. Creates the audit trail regulators and insurers look for.

Operational Risk Tools

-

A structured log of identified AI risks across systems, with likelihood/impact scoring and mitigation owners. Integrates AI into your broader enterprise risk management framework.

-

A step-by-step guide for the first 24–72 hours following an AI-related issue or harm event. Covers containment, documentation, communication, and regulatory escalation.

-

A structured pre-deployment evaluation that analyses how an AI system could affect individuals, operations, reputation, and compliance, ensuring potential harms are identified and mitigated before go-live.
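As an illustration of the likelihood/impact scoring mentioned for the risk log, a very small register could look like the sketch below. The 1 to 5 scales, the multiplication rule, and the names `RiskEntry` and `prioritise` are assumptions chosen for the example. Organisations often use different scales or a lookup matrix, and the sample entries are invented.

```python
from dataclasses import dataclass


@dataclass
class RiskEntry:
    """One row of a hypothetical AI risk register."""
    system: str
    description: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)
    owner: str       # person responsible for the mitigation

    def __post_init__(self) -> None:
        # Reject scores outside the assumed 1-5 scale.
        for name in ("likelihood", "impact"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        """Simple likelihood x impact score (an assumed convention)."""
        return self.likelihood * self.impact


def prioritise(register: list[RiskEntry]) -> list[RiskEntry]:
    """Return the entries sorted from highest to lowest score."""
    return sorted(register, key=lambda entry: entry.score, reverse=True)


# Invented sample entries, for illustration only.
register = [
    RiskEntry("CV screening tool", "Biased shortlisting", 2, 3, "HR lead"),
    RiskEntry("Support chatbot", "Incorrect advice to customers", 4, 5, "Head of service"),
]
for entry in prioritise(register):
    print(entry.score, entry.system, entry.owner)
```

Because every entry carries an owner and a score, the same rows can be exported into a broader enterprise risk register without restructuring.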