AI Risk Management

AI risk management helps organisations understand, prioritise, and control AI‑related risks across the entire AI lifecycle, enabling responsible innovation while protecting people, systems, and organisational reputation.

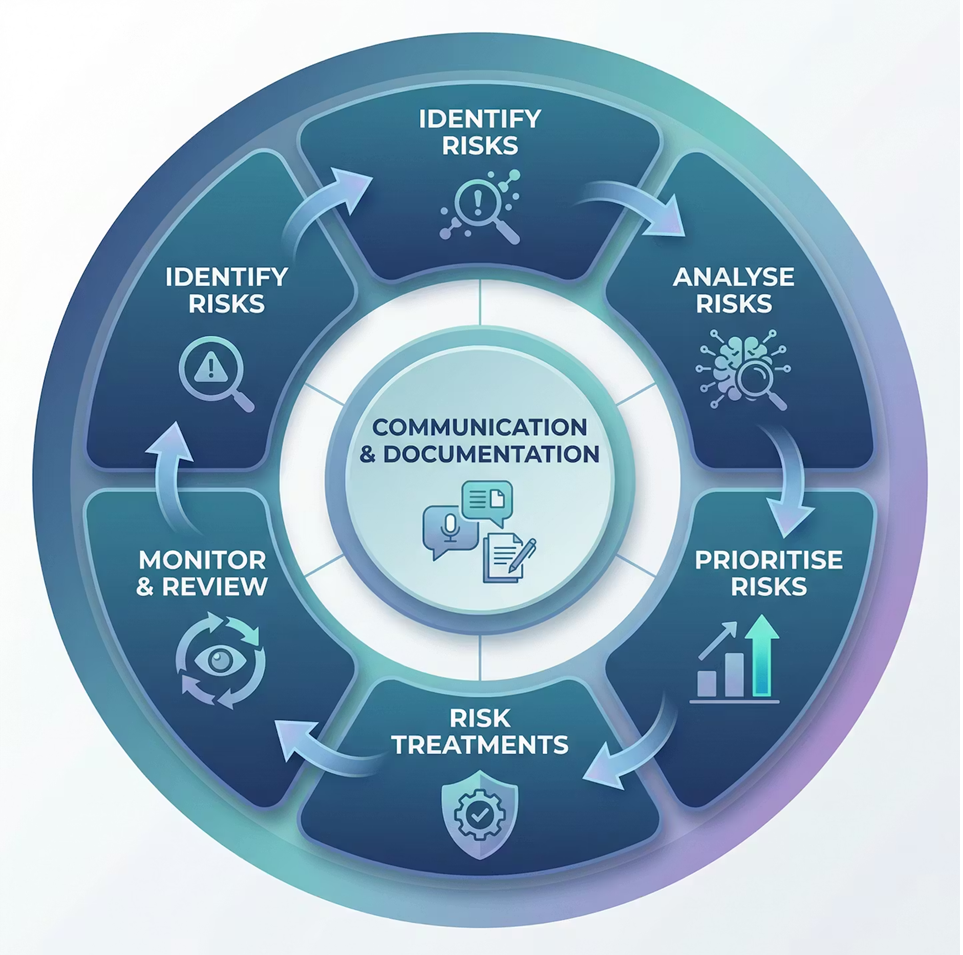

A cycle‑based approach to AI risk

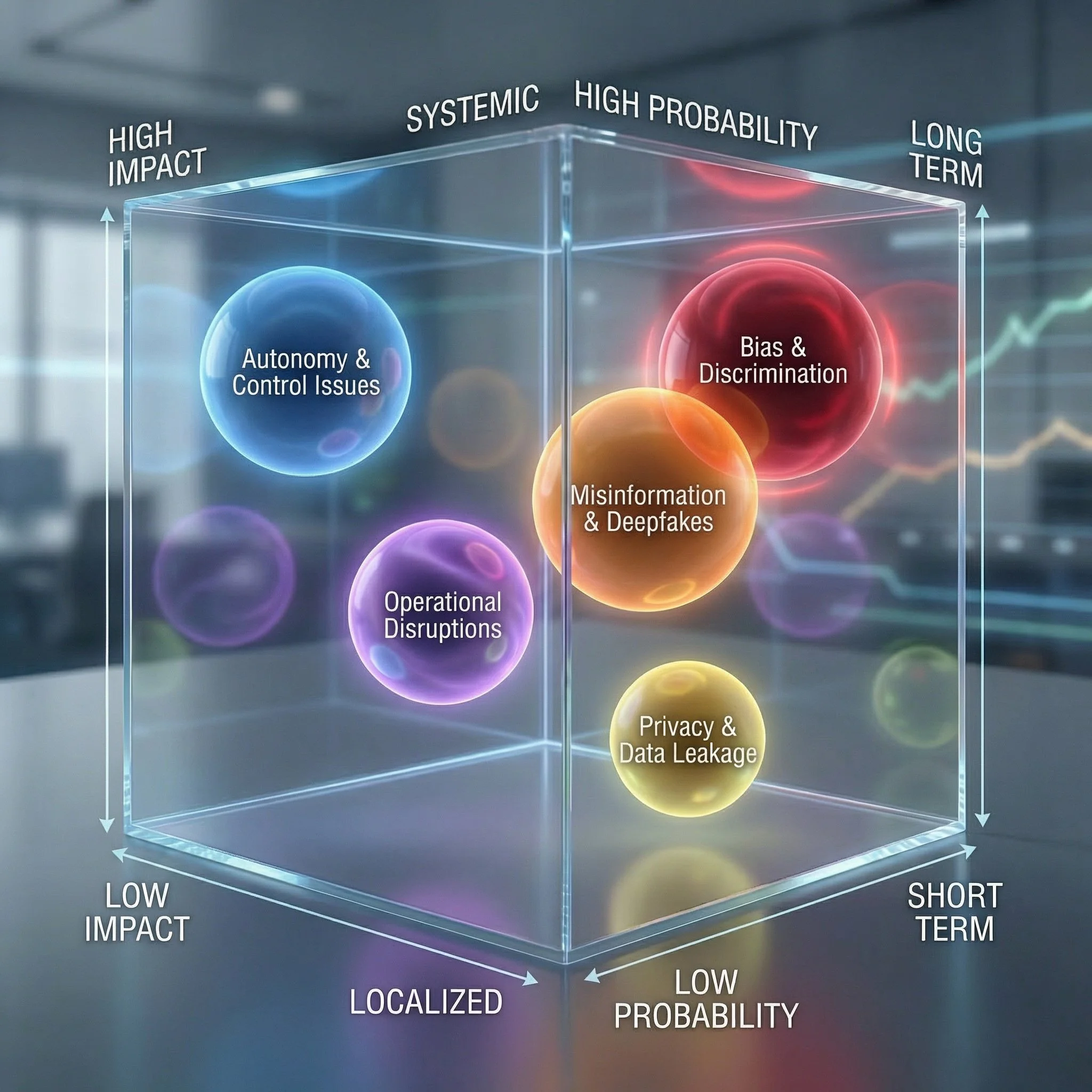

Most common risks

1. Regulatory design challenges
2. Opacity, explainability, and interpretability risks
3. Challenges in tracking and monitoring decentralized AI systems
4. Interrelated risks from data and cybersecurity
5. Interrelated risks from copyrights and intellectual property
6. Growing digital divide
7. Lack of effective enforcement
8. Disproportionate influence of non-state actors
9. Inclusivity risks
10. Labour force displacement and income inequality
11. Environmental footprint of AI

What makes AI Risk Management different?

AI systems change over time

- AI models change over time due to model drift and ongoing learning, which means their behaviour and accuracy can shift unpredictably even after deployment. This makes continuous monitoring essential: 91% of machine learning models reportedly show performance drift over several years.
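One common way to watch for drift is to compare the distribution of a model's scores (or of an input feature) at deployment with a later sample. The sketch below uses the Population Stability Index for this. The function names, the bin count, and the 0.1 / 0.25 cut-offs are illustrative assumptions (rule-of-thumb values, not prescribed by any of the frameworks discussed here), and the data is made up.

```python
import math
from typing import Sequence


def population_stability_index(
    reference: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare two samples of one numeric feature or model score.

    Bin edges come from the reference sample; values outside that range
    are clamped into the first or last bin.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid zero width if reference is constant

    def proportions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    ref_p, cur_p = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


def drift_status(psi: float, watch: float = 0.1, alert: float = 0.25) -> str:
    """Map a PSI value to a status using hypothetical rule-of-thumb cut-offs."""
    if psi >= alert:
        return "drift"
    if psi >= watch:
        return "watch"
    return "stable"


# Scores captured at deployment versus scores from a later period (made-up data).
baseline = [i / 100 for i in range(100)]
recent = [0.5 + i / 200 for i in range(100)]
print(drift_status(population_stability_index(baseline, recent)))
```

In practice a check like this would run on a schedule and feed the monitoring dashboards and escalation process, with thresholds tuned to the organisation's risk appetite.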

AI creates new and distinct risk types

- AI introduces risks such as bias, hallucinations, data poisoning, and adversarial attacks that have no direct equivalent in traditional systems. Left unmanaged, these risks can cause significant harm, including discriminatory decision-making and heightened security vulnerabilities.

AI systems often lack transparency

- Many AI models operate as “black boxes,” making it difficult to understand how decisions are generated or which data influenced them. This opacity has prompted regulators to impose stronger explainability and transparency requirements on AI‑driven decisions.

AI has specific regulatory requirements

- Regulation for AI is accelerating worldwide. Frameworks like the EU AI Act impose strict compliance requirements for high‑risk AI systems, including documentation, testing, and lifecycle oversight, with penalties for non‑compliance.

AI systems often face governance gaps

- Organisations frequently deploy AI rapidly to capture value, bypassing essential safeguards such as model validation, fairness checks, and structured monitoring. These gaps increase the likelihood of compliance failures, operational errors, and reputational damage.

AI can scale and amplify harm at speed

- Because AI systems can generate outputs autonomously and at high volume, small errors can escalate quickly into widespread issues. Generative and learning systems can also develop unpredictable behaviours, increasing the need for strong oversight and rapid intervention.

Considerations in Risk Analysis

Where are risks developing, growing, or shifting?

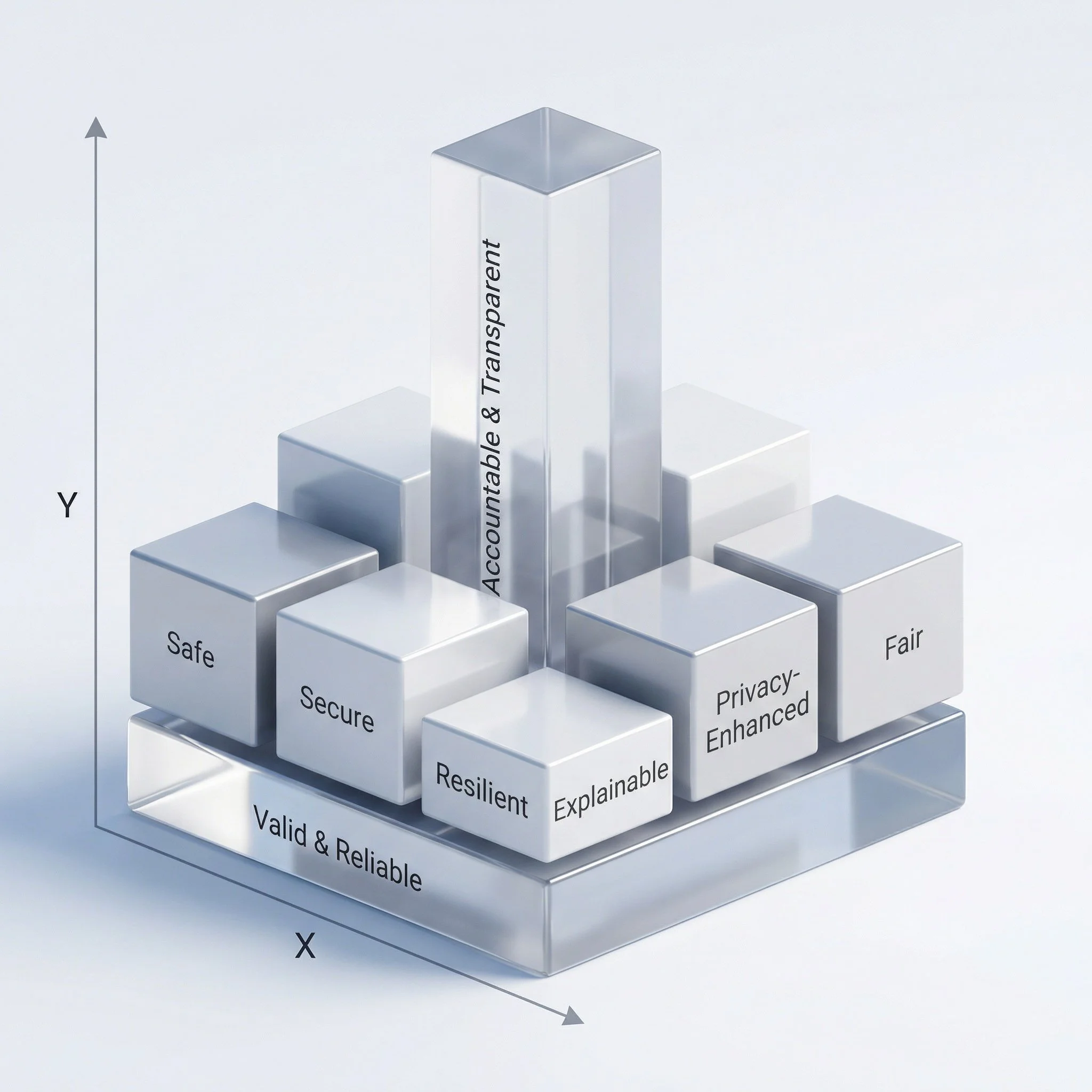

What qualities are we aiming to achieve?

At what level can potential harm occur?

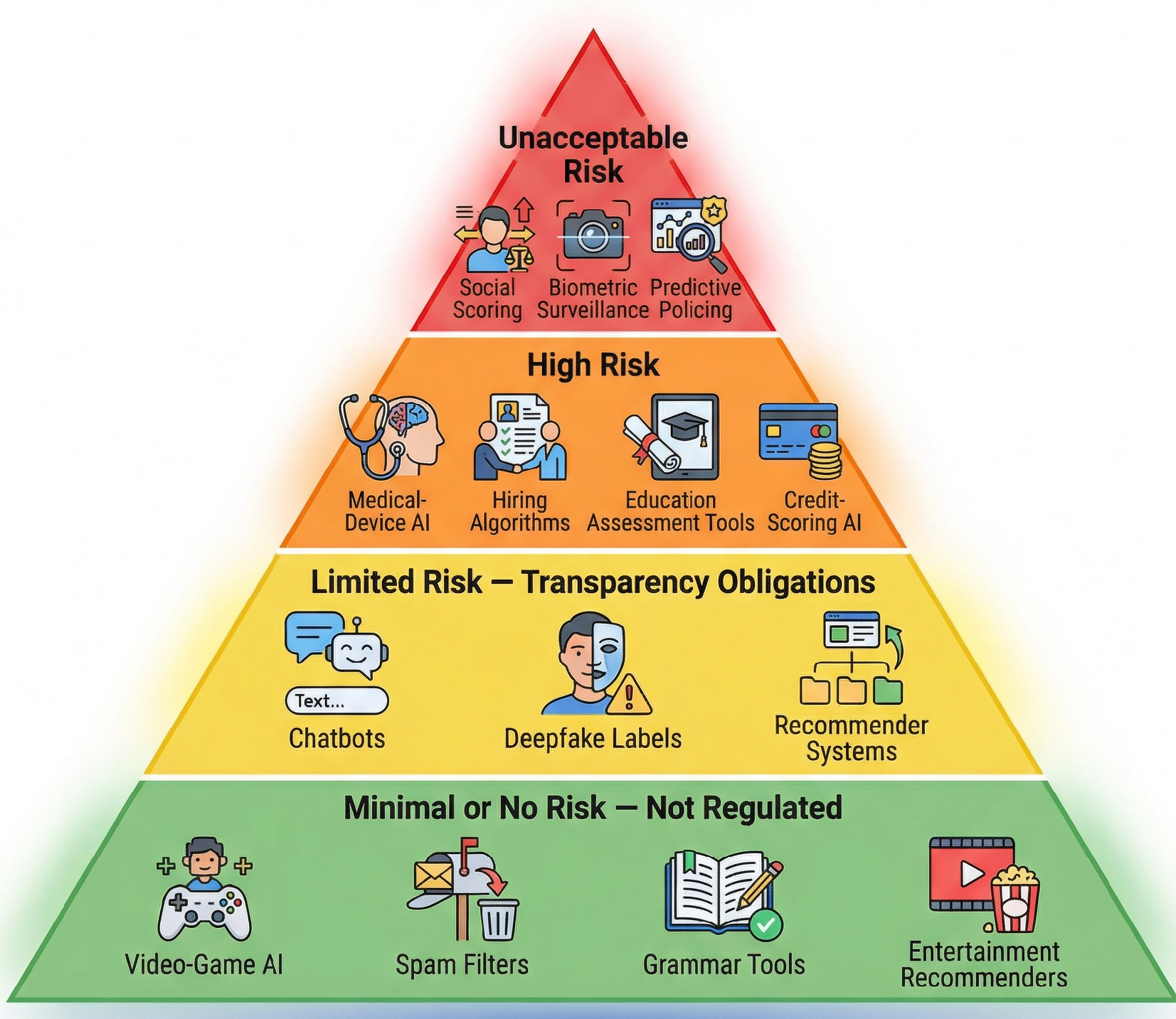

EU AI Act Risk Classification

AI systems under the EU AI Act are classified into four risk levels—unacceptable, high, limited, and minimal—each determining the regulatory obligations that apply, from outright prohibition to simple transparency requirements.

Because high‑risk systems must comply with mandatory requirements such as risk management, data governance, transparency, and human oversight, risk management sits at the core of the Act’s compliance framework.
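The four tiers can be sketched as a simple lookup from risk level to the obligations summarised in this section. This is a simplified illustration only: the names `RiskTier`, `OBLIGATIONS`, `obligations_for`, and `may_deploy` are hypothetical, and the obligation lists paraphrase the text above rather than the legal wording of the Act.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Simplified summary of this section, not the legal text of the Act.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["prohibited"],
    RiskTier.HIGH: [
        "risk management",
        "data governance",
        "transparency",
        "human oversight",
    ],
    RiskTier.LIMITED: ["transparency requirements"],
    RiskTier.MINIMAL: [],
}


def obligations_for(tier: RiskTier) -> list:
    """Return a copy of the obligations recorded for a tier."""
    return list(OBLIGATIONS[tier])


def may_deploy(tier: RiskTier) -> bool:
    """Only the unacceptable tier is ruled out entirely."""
    return tier is not RiskTier.UNACCEPTABLE


for tier in RiskTier:
    print(tier.value, may_deploy(tier), obligations_for(tier))
```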

Important Tools for Risk Management

Risk Analysis and Prioritisation

Framework Guidance:

NIST AI RMF — GOVERN + MEASURE: Establish risk tolerance, evaluate significance.

ISO/IEC 42001 — Clause 8 Controls: Determine which risks require immediate action.

EU AI Act: Provides impact‑based thresholds for high‑risk or prohibited applications.

AIGA: Encourages transparent and evidence‑based triage methods.

Risk Analysis and Prioritisation Enablers

AI Risk Appetite & Prioritisation Criteria

Defines how much AI risk the organisation is willing to accept and what factors drive priority (human impact, scale, regulatory exposure).

AI Risk Severity & Likelihood Matrix

Scores and ranks AI risks based on impact and probability.

Risk Heatmaps & Visual Dashboards

Visualises and compares AI risks across use cases to support prioritisation.

AI Deployment Go / No-Go Decision Framework

Translates risk analysis into clear decisions: approve, mitigate, pause, or stop.
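The severity and likelihood matrix and the go / no-go framework above can be sketched together: each risk is scored as severity times likelihood, risks are ranked by score, and the highest score drives the decision. The 1 to 5 scales, the threshold values (`appetite`, `pause_at`, `stop_at`), and the example risks are all hypothetical; a real organisation would set them from its own risk appetite statement.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    severity: int    # 1 (negligible) to 5 (critical)
    likelihood: int  # 1 (rare) to 5 (almost certain)

    @property
    def score(self) -> int:
        # Position in a 5 x 5 severity/likelihood matrix, from 1 to 25.
        return self.severity * self.likelihood


def rank(risks):
    """Order risks from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


def decide(risks, appetite: int = 9, pause_at: int = 15, stop_at: int = 20) -> str:
    """Turn the worst risk score into approve / mitigate / pause / stop."""
    worst = max((r.score for r in risks), default=0)
    if worst >= stop_at:
        return "stop"
    if worst >= pause_at:
        return "pause"
    if worst > appetite:
        return "mitigate"
    return "approve"


use_case = [
    Risk("biased outcomes", severity=4, likelihood=3),
    Risk("model drift", severity=2, likelihood=4),
]
for r in rank(use_case):
    print(r.name, r.score)
print(decide(use_case))
```

Driving the decision from the single worst risk is a deliberately conservative choice in this sketch; an organisation could instead weight factors such as human impact or regulatory exposure.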

Risk Assessment

Framework Guidance:

NIST AI RMF — GOVERN + MAP + MANAGE + MEASURE: Identify context, map risks, assess likelihood & harm.

ISO/IEC 42001 — Risk Assessment Requirements: Comprehensive, structured analysis.

EU AI Act — Article 9 Risk Management: Mandatory risk assessment for high‑risk AI.

AIGA: Includes human‑centric and societal impacts in assessments.

Risk Assessment Enablers

AI Risk Category Library

(bias & fairness, safety & reliability, privacy & data protection, misuse & abuse, model drift & performance)

Risk Classification Guidance

(e.g. risk tiering inspired by the EU AI Act: minimal, limited, high, unacceptable)

AI Impact Assessments (AIIA)

(structured questionnaires to identify impacts, risks, and required controls)

Monitoring and Reporting

Framework Guidance:

NIST AI RMF — MANAGE: Lifecycle monitoring, model drift detection, incident handling.

ISO/IEC 42001 — Continual Improvement & Reporting: Audit trails, performance reviews.

EU AI Act — Post‑Market Monitoring & Incident Reporting Obligations.

AIGA: Emphasises transparent performance logs and accountability mechanisms.

Monitoring & Reporting Enablers

AI Performance & Risk Monitoring Dashboards

Ongoing tracking of key metrics such as accuracy, bias/fairness, drift, robustness, and explainability.

Incident Logging, Escalation & Reporting Framework

Standardised templates to record AI incidents, define escalation thresholds (e.g. safety events, fairness breaches, performance failures), and trigger timely action.

Periodic Review & Post-Market Monitoring Framework

Structured reviews and checklists to assess continued compliance, effectiveness of controls, and post-deployment risks.
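An incident log with escalation thresholds, as described above, might look like the following sketch. The incident kinds mirror the examples in the text; the threshold counts, the class and field names, and the system name in the usage example are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical thresholds: number of incidents of a kind, per system,
# at which the log signals that escalation is needed.
ESCALATION_THRESHOLDS = {
    "safety_event": 1,         # escalate on the first occurrence
    "fairness_breach": 1,
    "performance_failure": 3,  # tolerate isolated failures
}


@dataclass
class Incident:
    system: str
    kind: str
    description: str
    recorded_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


class IncidentLog:
    def __init__(self, thresholds=None):
        self.thresholds = dict(thresholds or ESCALATION_THRESHOLDS)
        self.incidents = []

    def record(self, incident: Incident) -> bool:
        """Store the incident and return True if escalation is triggered."""
        if incident.kind not in self.thresholds:
            raise ValueError(f"unknown incident kind: {incident.kind}")
        self.incidents.append(incident)
        count = sum(
            1
            for i in self.incidents
            if i.kind == incident.kind and i.system == incident.system
        )
        return count >= self.thresholds[incident.kind]


log = IncidentLog()
escalate = log.record(
    Incident("loan-scoring", "fairness_breach", "approval gap between groups")
)
print("escalate" if escalate else "log only")
```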

Mitigation and Control

Framework Guidance:

NIST AI RMF — MANAGE: Implement safeguards, resilience, oversight, security.

ISO/IEC 42001 — Operational Controls: Monitoring, validation, audits, human oversight.

EU AI Act — Articles 10–15: Requires mitigation controls for data, logging, human oversight, accuracy, robustness and cybersecurity.

AIGA: Advocates layered controls including technical, human, and procedural.

Mitigation Enablers

Bias, Fairness & Data Quality Controls

Integrated checklists and standards to address bias, representativeness, data quality, and data suitability.

Human Oversight & Accountability Design Guide

Defines where and how humans supervise AI decisions, intervene, and remain accountable (aligned with the EU AI Act's human oversight requirements).

Model Monitoring, Drift Detection & Mitigation Playbooks

Ongoing controls to detect performance degradation, misuse, or emerging risks, supported by scenario-based response actions.
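As one example of a bias and fairness control, the sketch below compares the rate of positive decisions across groups and flags a model when the lowest rate falls too far below the highest. The function names are hypothetical, the data is made up, and the 0.8 minimum ratio is an assumed rule of thumb rather than a requirement taken from any framework listed above. It is one possible check, not a complete fairness assessment.

```python
from collections import defaultdict


def selection_rates(groups, decisions):
    """Share of positive decisions per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, decision in zip(groups, decisions):
        totals[group] += 1
        positives[group] += int(bool(decision))
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(groups, decisions) -> float:
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(groups, decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive decision
    return min(rates.values()) / highest


def passes_fairness_check(groups, decisions, min_ratio: float = 0.8) -> bool:
    # min_ratio is an assumed threshold; set it from your own policy.
    return disparate_impact_ratio(groups, decisions) >= min_ratio


# Made-up decisions for two groups of four applicants each.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
decisions = [1, 1, 1, 0, 1, 0, 0, 0]
print(selection_rates(groups, decisions))
print(passes_fairness_check(groups, decisions))
```

A failed check would typically route the model to human review before further deployment rather than block it automatically.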