Embed and Accelerate AI Governance Maturity

Embedding AI governance means integrating AI‑specific expectations directly into existing organisational structures and processes. Instead of creating parallel systems or burdensome new layers, governance becomes a natural extension of what teams already know: policies, risk frameworks, development cycles, and decision‑making routines.

How can governance be embedded so seamlessly that it feels effortless?

Organizations need to see where AI fits and focus on the changes that ensure safety and accountability.

AI Governance Maturity

An AI Governance Maturity Model empowers organizations to safely scale AI by providing a clear, structured pathway to responsible, transparent, and trustworthy AI adoption.

Where does your organization currently stand on its journey toward responsible, transparent, and well‑governed AI?

AI Governance Maturity Model

- AI is used in pockets with minimal policy, fragmented responsibilities, and largely undocumented, reactive handling of risks and incidents across the lifecycle.
- Some AI projects follow simple guidelines and checklists, with named owners and partial inventories, but practices remain inconsistent, undocumented, and weakly monitored across the organization.
- An organization‑wide AI policy, clear roles, standard lifecycle processes, and documentation templates are established and applied consistently, supported by a maintained inventory and basic risk classification.
- AI governance is quantitatively managed with defined KPIs/KRIs, systematic monitoring, incident management, internal reviews, and evidence‑based adjustments to policies and controls across all key dimensions.
- AI governance is continuously improved and externally informed, with proactive horizon scanning, lessons learned loops, advanced metrics (including fairness and impact), and a strong culture that embeds AI risk and ethics into everyday decision‑making.
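One way to turn the five levels above into a repeatable self-assessment is to read them as cumulative: an organization sits at the highest level whose criteria it meets, provided every lower level is also met. That staged reading is common in capability maturity models but is an assumption here, as are the abbreviated criteria names, which are hypothetical paraphrases of the level descriptions rather than part of the model.

```python
from collections.abc import Mapping, Sequence

# Hypothetical, abbreviated criteria paraphrased from the five level descriptions.
# Index 0 is the first (ad hoc) level, which has no entry criteria.
LEVEL_CRITERIA: Sequence[Sequence[str]] = (
    (),
    ("named_owners", "partial_inventory", "basic_guidelines"),
    ("org_wide_policy", "standard_lifecycle", "maintained_inventory",
     "risk_classification"),
    ("kpis_kris_defined", "systematic_monitoring", "incident_management",
     "internal_reviews"),
    ("horizon_scanning", "lessons_learned_loop", "fairness_impact_metrics"),
)


def assess_maturity(evidence: Mapping[str, bool]) -> int:
    """Return the current level (1-5) under a staged, cumulative reading.

    A level counts only if its criteria and those of every lower level are met.
    """
    level = 1
    for number, criteria in enumerate(LEVEL_CRITERIA[1:], start=2):
        if not all(evidence.get(c, False) for c in criteria):
            break  # a gap here caps the result, even if higher criteria are met
        level = number
    return level


def gaps_to_next_level(evidence: Mapping[str, bool]) -> list[str]:
    """List the unmet criteria that block progression to the next level."""
    current = assess_maturity(evidence)
    if current == len(LEVEL_CRITERIA):
        return []  # already at the top level
    # LEVEL_CRITERIA is zero-indexed, so index `current` holds level current + 1.
    return [c for c in LEVEL_CRITERIA[current] if not evidence.get(c, False)]


# Example self-assessment with hypothetical evidence.
evidence = {
    "named_owners": True, "partial_inventory": True, "basic_guidelines": True,
    "org_wide_policy": True, "standard_lifecycle": False,
}
print(assess_maturity(evidence))     # 2
print(gaps_to_next_level(evidence))  # ['standard_lifecycle', 'maintained_inventory', 'risk_classification']
```

Listing the gaps to the next level, rather than only a score, keeps the assessment actionable. Scoring each key dimension separately and reporting the lowest would be an equally reasonable design; a single list keeps the sketch short.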

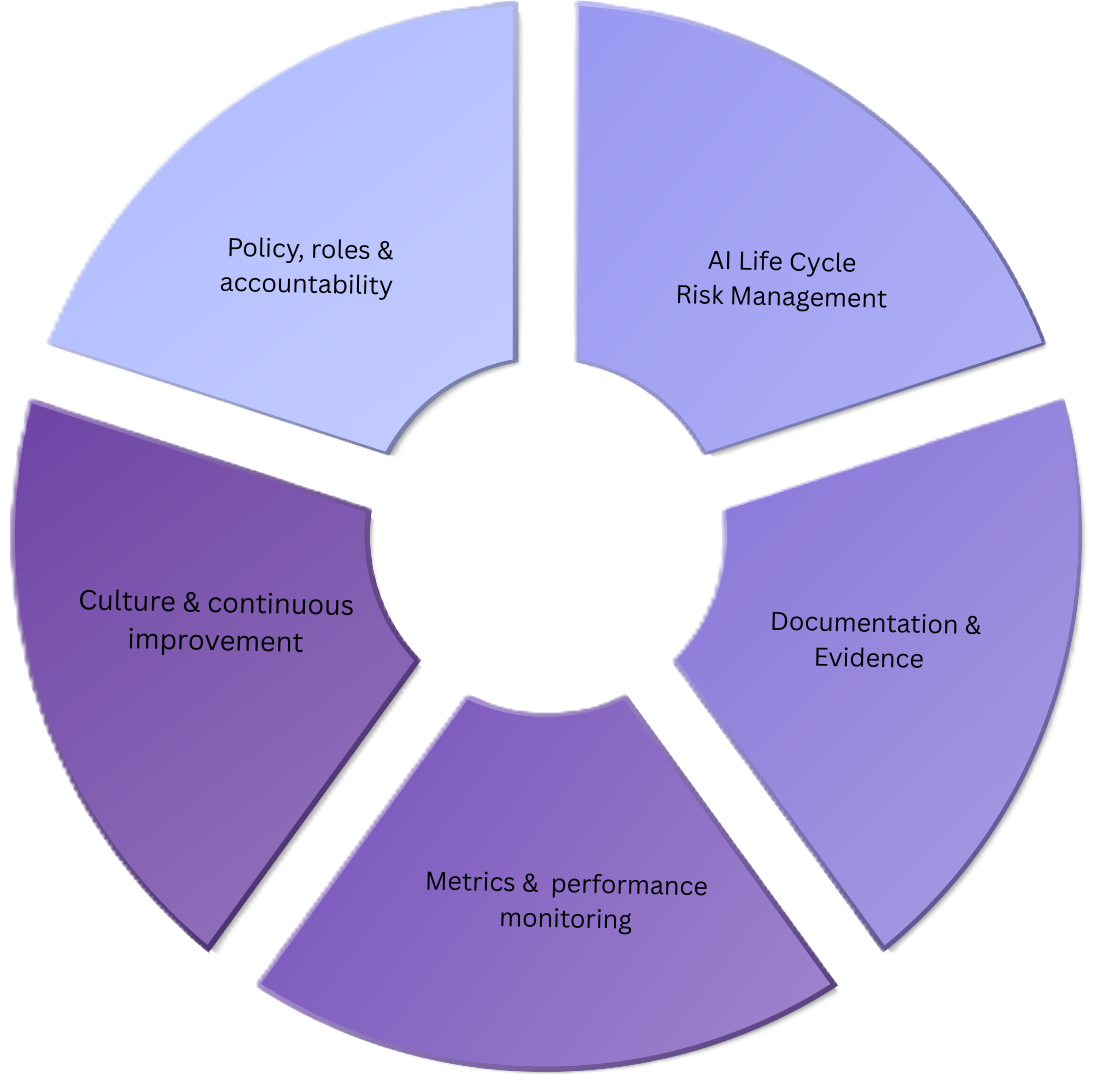

Key Components of the Model

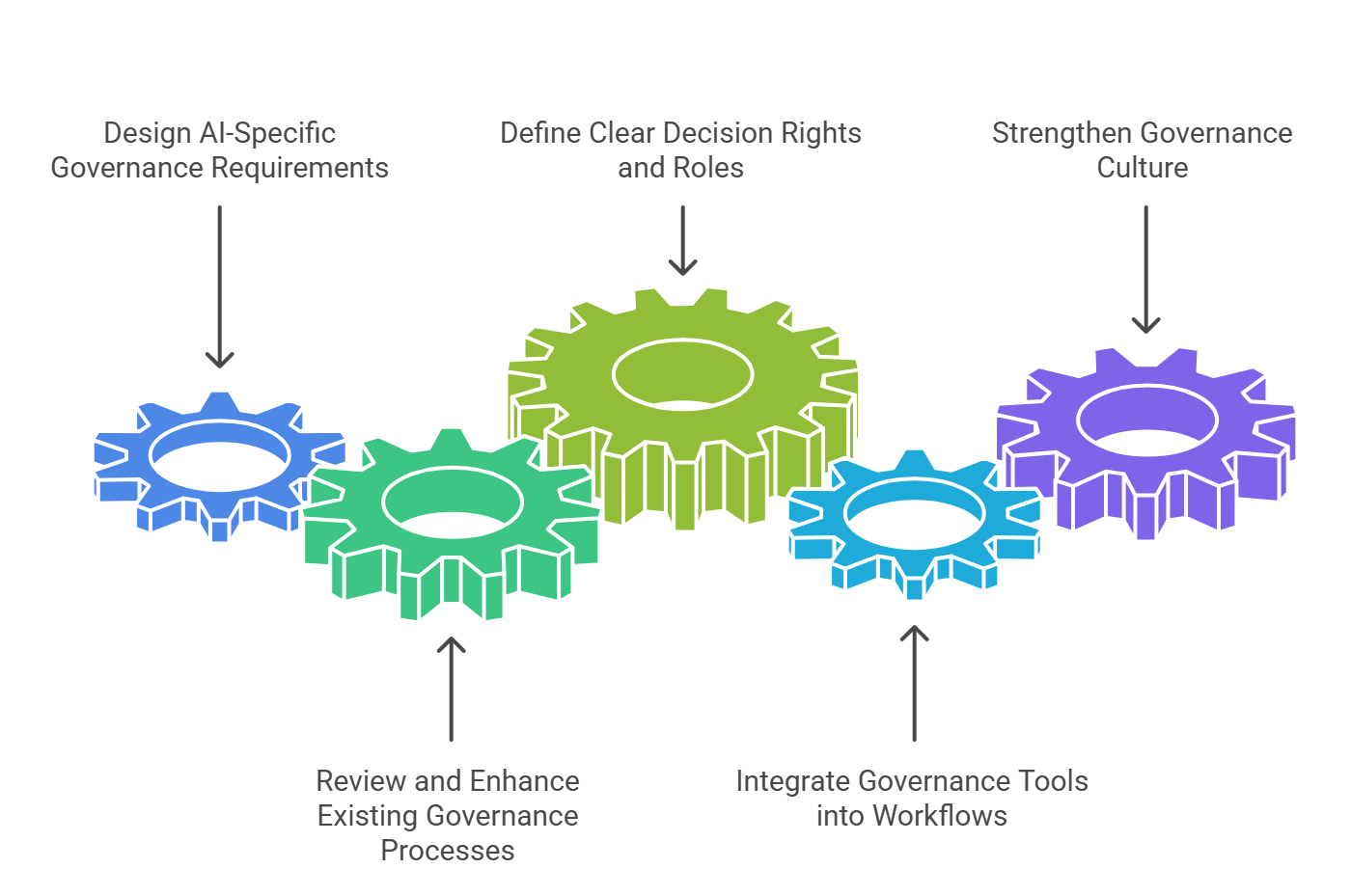

Important Tools for Embedding and Accelerating AI Governance

Documentation & Transparency Templates

Framework Guidance:

The EU AI Act mandates extensive technical documentation, logging, transparency, and record‑keeping for high‑risk AI systems, as well as technical documentation for general‑purpose AI models.

The NIST AI RMF emphasises documentation that supports explainability, interpretability, and traceability.

ISO/IEC 42001 requires organisations to maintain structured documented information as part of their AI management system.

Documentation enablers

AI System Overview & Design Records

Plain-language summaries explaining what the AI does, how it works, and why it was designed that way.

Data & Model Documentation

Standard templates describing the data used, model characteristics, limitations, and known risks.

Transparency & Disclosure Statements

Clear internal and external disclosures on AI use, impacts, and user rights.
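The three documentation enablers can be held as one structured record so that empty sections are caught before a review rather than during it. This is a minimal sketch: the field names are hypothetical and chosen to mirror the enabler descriptions; they are not taken from any template defined by the EU AI Act, the NIST AI RMF, or ISO/IEC 42001.

```python
from dataclasses import dataclass, field, fields


@dataclass
class AISystemRecord:
    """Hypothetical documentation record combining the three enablers."""

    # AI System Overview & Design Records
    system_name: str
    purpose: str = ""            # what the AI does, in plain language
    how_it_works: str = ""       # high-level description of the approach
    design_rationale: str = ""   # why it was designed that way
    # Data & Model Documentation
    data_sources: list[str] = field(default_factory=list)
    model_characteristics: str = ""
    limitations: list[str] = field(default_factory=list)
    known_risks: list[str] = field(default_factory=list)
    # Transparency & Disclosure Statements
    disclosure_statement: str = ""  # AI use, impacts, and user rights


def missing_sections(record: AISystemRecord) -> list[str]:
    """Return the names of fields left empty (blank strings or empty lists)."""
    missing = []
    for f in fields(record):
        value = getattr(record, f.name)
        if isinstance(value, str) and not value.strip():
            missing.append(f.name)
        elif isinstance(value, list) and not value:
            missing.append(f.name)
    return missing


record = AISystemRecord(
    system_name="Invoice triage assistant",  # hypothetical system
    purpose="Routes incoming invoices to the right finance team.",
    data_sources=["historical invoices"],
)
print(missing_sections(record))
# ['how_it_works', 'design_rationale', 'model_characteristics', 'limitations', 'known_risks', 'disclosure_statement']
```

A check like this can run as a required step before a governance review, so incomplete records are returned to the owner instead of reaching the approver.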

AI Governance Policies, Roles & Decision Frameworks

Framework Guidance:

The NIST AI RMF lists Govern first among its four core functions (Govern, Map, Measure, Manage); Govern includes establishing clear roles and responsibilities.

ISO/IEC 42001 is built around a management system with documented governance roles, organisational controls, and responsibilities.

The EU AI Act requires defined responsibilities for providers, deployers, and human oversight roles.

Governance enablers

AI Policy & Governance Framework

Sets organisational principles, risk expectations, scope of AI use, and alignment with laws and ethics.

Roles, Responsibilities & Accountability Model (RACI)

Clearly defines ownership across the AI lifecycle, including accountability for risk, compliance, and outcomes.

Decision Rights, Escalation & Governance Bodies

Establishes who can approve AI use, how decisions are made, when issues are escalated, and the role of governance committees.
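A RACI model stays consistent more easily when it is stored as data and checked automatically. The sketch below applies the common RACI convention that each activity has exactly one Accountable party and at least one Responsible party. The activities, role names, and the "AR" notation for a role that is both accountable and responsible are hypothetical choices for illustration, not requirements of the frameworks cited above.

```python
from collections.abc import Mapping

# Hypothetical RACI matrix: activity -> {role: codes}. Codes are "R", "A", "C", "I",
# or a combination such as "AR" for a role that is both accountable and responsible.
RACI: dict[str, dict[str, str]] = {
    "risk_assessment": {"product_owner": "A", "data_scientist": "R",
                        "risk_officer": "C", "legal": "I"},
    "deployment_approval": {"ai_governance_board": "A", "product_owner": "R",
                            "risk_officer": "C"},
    "incident_response": {"ml_engineer": "R", "legal": "C"},  # no Accountable role
}

VALID_CODES = {"R", "A", "C", "I"}


def validate_raci(matrix: Mapping[str, Mapping[str, str]]) -> list[str]:
    """Return a list of problems; an empty list means every activity passes."""
    problems = []
    for activity, assignments in matrix.items():
        codes = list(assignments.values())
        invalid = sorted(set("".join(codes)) - VALID_CODES)
        if invalid:
            problems.append(f"{activity}: unknown codes {invalid}")
        accountable = sum("A" in code for code in codes)
        if accountable != 1:
            problems.append(f"{activity}: expected exactly one 'A', found {accountable}")
        if not any("R" in code for code in codes):
            problems.append(f"{activity}: no 'R' (Responsible) role assigned")
    return problems


for problem in validate_raci(RACI):
    print(problem)
# incident_response: expected exactly one 'A', found 0
```

Running such a check whenever the matrix changes turns a gap in accountability into a visible issue that can be escalated, rather than one discovered during an incident.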

AI Lifecycle & Operational Workflow Integration

Framework Guidance:

The NIST AI RMF calls for applying its governance functions across the full AI lifecycle.

ISO/IEC 42001 emphasises embedding controls into operational processes (planning, design, development, deployment, change management).

The EU AI Act requires lifecycle documentation, quality management, and process integration for high‑risk AI.

Workflow enablers

Governance Stage-Gates Across the AI Lifecycle

Clear decision checkpoints at key stages (design, development, deployment, monitoring).

Built-In Templates, Checklists & Guidance

Practical tools embedded directly into project workflows to support consistent compliance.

Automated Governance Triggers & Workflow Steps

Automated prompts and required actions that ensure governance is applied consistently and on time.

Change Management

Framework Guidance:

The NIST AI RMF calls for change management as part of its lifecycle coverage.

ISO/IEC 42001 requires AI controls to be embedded into operational processes, including change management.

The EU AI Act requires lifecycle documentation, quality management, and ongoing process integration for high-risk AI.

Change management enablers

Leadership Sponsorship & Role Enablement

Ensures clear ownership, accountability, and leadership backing for AI governance adoption.

Training, Awareness & Capability Building

Builds understanding of AI risks, responsibilities, and governance requirements across relevant roles.

Adoption, Feedback & Continuous Improvement Mechanisms

Supports behavioural change through communication, feedback loops, and ongoing refinement of governance practices.